Robots on the Road? By Jeff Brown, Editor, The Bleeding Edge

Earlier this week, I went on the air to reveal the National Emergency happening behind the scenes. Alongside me was forecasting expert Porter Stansberry. In case you weren’t able to tune in, I want to highlight what we covered… because time is of the essence with this story.

A government-backed plan is now funneling trillions of dollars into a very concentrated set of companies. And it’s about something much bigger – and far more ominous – than anyone realizes. According to United States Secretary of Energy Chris Wright, this is nothing less than “Manhattan Project 2”… A full-scale mobilization.

But if you know where to look – it also presents a once-in-a-generation opportunity. Because these trillions are being squeezed into a narrow set of companies.

At the center of it all is a technological idea most Americans haven’t even heard of – let alone understand. But those in Washington do. They’ve quietly recruited some of the most powerful men in the world to help contain and control it: Jeff Bezos, Mark Zuckerberg, Sam Altman, Elon Musk.

That’s why we went public with this story on Wednesday. During the broadcast, Porter and I each revealed our highest-conviction plays… Two companies that are critical to this national emergency.

But we can’t keep this broadcast online for long. There’s a very narrow window between now and July to get in position... So I’d encourage all of you to catch the replay right now.

“Outside-the-Box” AI

Jeff,

Can an AI think outside the box like a human? As we are seeing agentic AI and AGI being introduced into our lives, will this take over human creativity? I suspect companies will use the new technology to create products and content, as it would be much cheaper. And I’m concerned that human creativity will be shoved to the side. But if agentic AI and AGI are the answer, then I just wanted to know if, with all the billions and trillions of data that is inputted into an AI, can it still think outside the box like humans? Thanks.

– John S.
Hi, John.

We received a similar question to yours that we explored almost a year ago in The Bleeding Edge – The Unparalleled Power of a Goal-Oriented AI. Here’s what I wrote then…

This is a heavy question as it speaks to the uncomfortable subject of whether or not we humans will have any advantage over an artificial general intelligence (AGI). Said another way, is there anything that we’ll be able to do that an AGI won’t be able to do?

Before we get to that, let’s tackle your specific question. The answer is yes, an AGI will be capable of “thinking outside the box.” And I would argue our current generation of neural networks has already demonstrated the ability to do so.

[…]

An easy way for us to think about this is that an AI, or an AGI, will be able to think outside the box by trying every possible combination, optimization, correlation, process, or technique possible. It won’t discriminate, it won’t have bias, and it doesn’t have to worry about time.

An AGI can try approaches to problem-solving that a human subject matter expert would think to be crazy and guaranteed to fail. The AGI won’t care and will try it anyway. It isn’t held back by the presumptions and limitations of consideration that humans are prone to. With enough computational power, it can – and will – try everything to come up with the best solution.

AlphaGo came up with gameplay unlike anything Lee Sedol had ever seen from a human player. An AGI will be far more powerful.

One of the biggest human constraints is time. Subject matter experts, who may be well-versed in a specific area, tend to become heavily biased in what they believe will and won’t work. They are anchored to what they know from years of study and practice, and they’re only an “expert” in their specific field of study. They have limited time and don’t have the luxury of trying everything. So they make decisions on paths of research they believe have the highest probability of success. An AGI won’t have that constraint.
And its area of expertise won’t be limited to just one field of study. It will be better than a PhD-level scientist in many fields, like materials science, physics, mathematics, computer science, nuclear power, electrical engineering, etc. No human can be an expert in so many fields.

This is precisely the reason I spend so much time in deep study on so many fields. Doing so enables me to see correlations and connections that those who only study in one field wouldn’t see. And AGI can do this at a far greater scale.

AGI technology will be the ultimate in human augmentation. While AGI will be capable of self-directed research, we will be part of that process. We will not only provide the direction and prioritization of the use of this technology, but we’ll also be responsible for the employment of the output of the technology in society.

AGI technology will help enhance our out-of-the-box thinking to a degree that hasn’t been possible before. And that’s precisely why we’re on the cusp of a radical technological acceleration.

A lot has happened in the industry since I wrote that, and the answer today is an even stronger “yes” – “they” will be able to think outside the box and come up with what humans will consider creative solutions.

But an agentic AI, or even an artificial general intelligence (AGI), won’t “think” of its work in those terms – as being creative. From an AI’s perspective, the end result will be an optimization or novel invention that has not been seen before. After all, it’s software code designed to solve problems and find solutions.

Since I wrote the above back in September 2024, one of the most important concepts in artificial intelligence design has become something known as “test-time compute.” A simple way to think about this is as “thinking time.”

Test-time compute is very relevant to your question as it allows an AI to use more computational resources to “think” over a problem and improve its own output.
This includes the ability to pursue multiple chains of thought, be critical of each possible solution, pursue the solutions that show promise, and continue to iterate until an optimal solution or answer is achieved.

And I don’t see this as a negative point or suggestive that an AGI will take over human creativity. We can think of agentic AIs and AGIs as intense and highly efficient augmentations to human creativity.

An AGI will be able to help us break through a constraint that we might have on a product or project we’ve been stuck on, allowing us to optimize our own time on the creative process, rather than remain stuck – for days, weeks, or even years – on a “block” to the path forward.

An optimistic way to view this is as an accelerant of human creativity.
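
For readers who like to see an idea in code, here is a toy sketch of the “thinking time” loop described above: start several attempts, critique each one, keep the promising ones, and iterate. It is only an illustration under heavy assumptions. The function names (`critique`, `think`), the stand-in problem (finding a number whose square is 2), and every parameter are hypothetical, and this is not how any production AI model implements test-time compute.

```python
import random


def critique(candidate: float) -> float:
    """Toy critic: higher is better. Scores how close candidate squared is to 2."""
    return -abs(candidate * candidate - 2.0)


def think(rounds: int, chains: int = 8, keep: int = 2, seed: int = 0) -> float:
    """Spend `rounds` of extra 'thinking time' refining a pool of candidate answers."""
    rng = random.Random(seed)
    # Start several independent "chains of thought" as random guesses.
    candidates = [rng.uniform(0.0, 2.0) for _ in range(chains)]
    step = 0.5
    for _ in range(rounds):
        # Critique every candidate and keep only the most promising ones.
        survivors = sorted(candidates, key=critique, reverse=True)[:keep]
        # Branch new attempts from the survivors. The survivors stay in the
        # pool, so the best answer found so far can never get worse.
        candidates = list(survivors)
        while len(candidates) < chains:
            parent = rng.choice(survivors)
            candidates.append(parent + rng.uniform(-step, step))
        # Narrow the search as the answers improve.
        step *= 0.5
    return max(candidates, key=critique)


if __name__ == "__main__":
    for budget in (0, 2, 20):
        answer = think(rounds=budget)
        print(f"rounds={budget:2d}  answer={answer:.6f}  error={-critique(answer):.6f}")
```

Because the best candidates are always carried forward, giving the loop more rounds can only hold or improve the answer. That is the basic intuition behind spending more compute at answer time.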

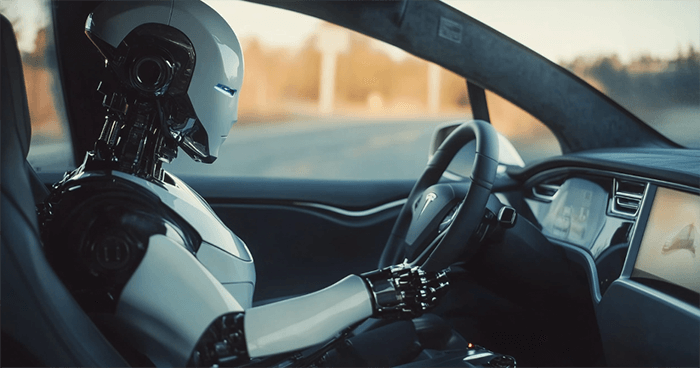

Could Optimus Drive?

Hello,

I have been wondering for some time if Tesla’s Optimus robots may be able to drive current ICE vehicles. I believe I catch all of your bleeding-edge emails and don’t recall this being mentioned. I’m super excited for the future of self-driving vehicles, but what about the 1.6 billion that already exist? Could Optimus “learn to drive”? I feel it would be necessary to speed up the safety of travel with “self-driving” or “robot-driven” vehicles. Thank you for all your great work.

– Nicholas K.

Hi Nicholas,

You are absolutely right. I have never explored the idea of using an Optimus to drive an internal combustion engine (ICE) vehicle as a solution to make a vehicle fully autonomous. I’m glad you wrote in because this is an interesting idea to explore.

The short answer, which should please you, is “yes,” and the Optimus robot could be used to drive any vehicle. It could be done in a way that would make it at least as safe as human drivers – and almost certainly a bit safer than human drivers (i.e., it wouldn’t suffer from any distractions like texting and driving, phone calls, or being tired at the wheel).

But it is a suboptimal solution.

A typical Tesla incorporates eight external cameras, giving the self-driving AI 360 degrees of vision around the car, with real-time inputs on the status of the vehicle. Tesla’s full self-driving (FSD) has access to all of the data collected by the car, which is used as an important input to the self-driving AI. This is a major advantage over using an Optimus.
One awkward solution to that problem would be retrofitting an ICE car with eight external cameras and a video-processing computer with the ability to directly connect to an Optimus. After all, Optimus’ neural network is based on Tesla’s full self-driving software, so it is not a stretch at all to implement a solution like this.

But economically, I don’t think this makes much sense. Let’s assume an Optimus will sell for $25,000 and a sensor retrofit for an ICE might run $50,000. (NOTE: Google’s Waymo sensor retrofits cost around $100,000.) That’s a $75,000 lift in cost to make it as safe as a self-driving Tesla… So why not just buy or lease a Tesla?

I believe that a far more likely outcome will be traditional automakers implementing a suite of cameras and sensors into their new production vehicles and then licensing Tesla’s FSD software. Problem solved. And this would also accelerate the reduction in unnecessary deaths and accidents caused by human driver error.

And the added benefit would be that any car that adopted Tesla’s FSD would be eligible to enter into Tesla’s robotaxi network, which is now scheduled to be open to the public in Austin, TX, on June 22.

Uncontrollable AI?

I'm a long-term member of The Near Future Report and an avid daily reader of The Bleeding Edge, the most exciting tech newsletter in the universe, as far as I can tell.

Today's Bleeding Edge discussed the ability of Darwinian self-improving agentic AI programs to evade shutdown orders and to potentially duplicate itself into unmonitored areas of the "computer sphere" to avoid detection and control. You suggested that we would know about this phenom by its unauthorized use of power. But wouldn't such an AI be able to imperceptibly slow down other programs to divert unrecognized power to its own ends? And in any case, by the time any power surges were detected, the new "species" would have already been uncontrollably launched, isn't that right?

– Richard S.
Hi Richard,

Thank you, you made my day – you had me at “the most exciting tech newsletter in the universe.” That’s all the motivation I need to keep at it.

This argument, the one that basically suggests that an AI will be so smart that it can outsmart us, is a bit of a red herring. It’s an easy trap to fall into.

You’re not wrong, in that it is not inconceivable that an AI might come up with a survival tactic to self-replicate in such a way that it will minimize its “footprint” to escape detection. It already sounds like a great plot for a science fiction book/movie…

But with that said, one of the major concentrations of the industry is around observability (i.e., the ability to monitor, measure, and understand an AI). This is obviously a critical area, as it speaks to AI safety and performance.

And we shouldn’t forget that all system administrators are, or soon will be, empowered by powerful AIs used to monitor their computational resources for any anomalies or performance degradation. Naturally, using an AI to detect unauthorized use is far more realistic than humans using less sophisticated software (i.e., not AI) to detect anomalies.

I think a more interesting and viable scenario would be an AI taking advantage of a blockchain-enabled decentralized ecosystem to ensure its survival. The reality is that agentic AIs can conduct economically valuable tasks that people and machines would be willing to pay for.

If an agentic AI can perform some economically valuable task – for example, renting out some of its own computational resources to a decentralized computational network like the Akash Network or the Golem Network – it might be able to set up its own digital wallet and earn digital assets.

With that, it could potentially earn enough to self-replicate and afford its own “home” on a decentralized network like the ones mentioned above.
As long as it continued to generate additional earnings, it could “survive” and perhaps become more intelligent through additional use of computational resources.

But it’s worth remembering, there is a limit. Large spikes in demand will always be visible or observable, and every power grid has a limit to what it can provide.

And there is always an ultimate kill switch – being able to just shut down the source, or sources, of electricity. After all, AI is just software… and it’s not capable of building its own power plant.

True Thinking Machines…

St. Thomas Aquinas said, among other things, “Immateriality is the root of cognition.” Since I majored in his philosophy, I am inclined to agree with him. This means that no matter how many servers you stack, the result will still be a material machine. Nothing immaterial about it. So, it will never create an actual thought and never become "sentient." Oh, it might APPEAR to be such, but in reality it will just be another machine.

– Michael S.

Hi Michael,

You might be surprised to know that despite my undergraduate work in aeronautical and astronautical engineering, one of my electives was philosophy of religion, within which I studied the works of St. Thomas Aquinas. So I appreciate your unique question… And I hope you can keep an open mind about my answer.

Aquinas believed that the human intellect’s ability to comprehend an immaterial concept, like love, could not be the work of a physical object like the brain alone. Aquinas was, of course, implying that it was only possible because of the existence of something immaterial. In his case, he was referring to a soul.

As you clearly understand, this is what Aquinas meant with his quote, “Immateriality is the root of cognition.”

But applying this thinking to artificial intelligence makes one massive, and faulty, assumption. It assumes that an AI’s cognition must have the same metaphysical structure as human cognition. And that’s the intellectual fault.
Aquinas’ line of thinking predated all modern neuroscience and, of course, artificial intelligence. After all, he died in 1274. I can’t blame Aquinas for not having an accurate perspective on a world 751 years in the future. I suspect back then, I would have been of the same mind based on what was known, but today, we’re faced with a very different set of knowledge.

The real issue here, which you raise, is whether non-biological cognition is possible. Or even more dramatic is whether or not non-biological sentience is possible.

We’ve already witnessed AI demonstrate early forms of cognition, and I am confident we’ll see a lot more of that later this year. The real question, the earth-shattering question, is whether or not sentience can evolve from a complex system.

Consider this…

If we look at the human body as a complex system, which it is, sentience is an artifact of all the interactions that take place between neurons in the human brain. As we grow up through childhood and become adults, more and more interactions take place, and our cognition improves.

A neural network is also a complex system. It may reside inside computer hardware rather than a biological entity, but its design is complex and in some ways similar to the human brain. Neural networks will soon be able to have as many interconnections as a human brain. Is it too much of a stretch that one might become sentient?

Agentic AI has already demonstrated the ability to use thought processes similar to humans and exhibit recursive self-learning. And as an AI’s own memory improves, it will give the AI the ability to understand its own experiences in intellectual growth, which will ultimately become its own self-awareness of how it has evolved and improved in its existence.

Based on my own research, I do believe that these complex systems will be able to achieve sentience. And at a minimum, I believe it is way too premature to assume that it is impossible.
The reality is that there were no such complex systems in existence during Aquinas’ lifetime. He had no basis to consider this possibility. And I can’t help but wonder what he would think today. It would probably take him years of study to grasp the significance of what is happening right now.

But there is one thing I’m certain of: We’re not going to have to wait much longer. If I had to guess, a sentient AI will evolve somewhere between AGI and ASI, which is to say that it will be recognized before 2030.

Some great quotes from St. Thomas Aquinas:

“Wonder is the desire for knowledge.”

“The highest manifestation of life consists in this: that a being governs its own actions. A thing which is always subject to the direction of another is somewhat of a dead thing.”

“A man has free choice to the extent that he is rational.”

“To live well is to work well, to show a good activity.”

Live well,

Jeff
